Introduction

- 1.1 What Is Computer Security?

- 1.2 Threats

- 1.3 Harm

- 1.4 Vulnerabilities

- 1.5 Controls

- 1.6 Conclusion

- 1.7 What's Next?

- 1.8 Exercises

In this chapter:

- Threats, vulnerabilities, and controls

- Confidentiality, integrity, and availability

- Attackers and attack types; method, opportunity, and motive

- Valuing assets

Beep Beep Beep [the sound pattern of the U.S. government Emergency Alert System]

Civil authorities in your area have reported that the bodies of the dead are rising from their graves and attacking the living. Follow the messages on screen that will be updated as information becomes available.

Do not attempt to approach or apprehend these bodies as they are considered extremely dangerous. This warning applies to all areas receiving this broadcast.

Beep Beep Beep

FIGURE 1.1 Emergency Broadcast Warning

On 11 February 2013, residents of Great Falls, Montana, received the preceding warning on their televisions [INF13].

The warning signal sounded authentic; it used the distinctive tone people recognize for warnings of serious emergencies such as hazardous weather or a natural disaster. And the text was displayed across a live broadcast television program. But the content of the message sounded suspicious.

What would you have done?

Only four people contacted police for assurance that the warning was indeed a hoax. As you can well imagine, however, a different message could have caused thousands of people to jam the highways trying to escape. (On 30 October 1938, Orson Welles performed a radio broadcast adaptation of the H. G. Wells novel The War of the Worlds that did cause a minor panic. Some listeners believed that Martians had landed and were wreaking havoc in New Jersey. And as these people rushed to tell others, the panic quickly spread.)

The perpetrator of the 2013 hoax was never caught, nor has it become clear exactly how it was done. Likely someone was able to access the system that feeds emergency broadcasts to local radio and television stations. In other words, a hacker probably broke into a computer system.

On 28 February 2017, hackers accessed the emergency equipment of WZZY in Winchester, Indiana, and played the same “zombies and dead bodies” message from the 11 February 2013 incident. Three years later, four fictitious alerts were broadcast via cable to residents of Port Townsend, Washington, between 20 February and 2 March 2020.

In August 2022, the U.S. Department of Homeland Security (DHS), which administers the Integrated Public Alert and Warning System (IPAWS), warned states and localities to ensure the security of devices connected to the system, in advance of a presentation at the DEF CON hacking conference later that month. At DEF CON, participant Ken Pyle presented the results of an investigation of emergency alert system devices that he had been conducting since 2019. Although he had reported the vulnerabilities to DHS, the U.S. Federal Bureau of Investigation (FBI), and the manufacturer when he found them, he claimed that, years later, they had still not been addressed. In August 2022, equipment manufacturer Digital Alert Systems issued an alert reminding its customers to apply the patches it had released in 2019. Pyle noted that these patches do not fully address the vulnerabilities because some customers use early product models that do not support the patches [KRE22].

Today, many of our emergency systems involve computers in some way. Indeed, you encounter computers daily in countless situations, often in cases in which you are scarcely aware a computer is involved, such as the delivery of drinking water from the reservoir to your home. Computers also move money, control airplanes, monitor health, lock doors, play music, heat buildings, regulate heartbeats, deploy airbags, tally votes, direct communications, regulate traffic, and do hundreds of other things that affect lives, health, finances, and well-being. Most of the time, these computer-based systems work just as they should. But occasionally they do something horribly wrong because of either a benign failure or a malicious attack.

This book explores the security of computers, their data, and the devices and objects to which they relate. Our goal is to help you understand not only the role computers play but also the risks we take in using them. In this book, you will learn about some of the ways computers can fail—or be made to fail—and how to protect against (or at least mitigate the effects of) those failures. We begin that exploration the way any good reporter investigates a story: by answering basic questions of what, who, why, and how.

1.1 What Is Computer Security?

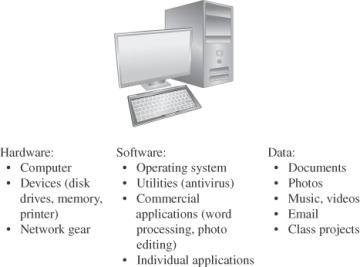

Computer security is the protection of items you value, called the assets of a computer or computer system. There are many types of assets, involving hardware, software, data, people, processes, or combinations of these. To determine what to protect, we must first identify what has value and to whom.

A computer device (including hardware and associated components) is certainly an asset. Because most computer hardware is pretty useless without programs, software is also an asset. Software includes the operating system, utilities, and device handlers; applications such as word processors, media players, or email handlers; and even programs that you have written yourself.

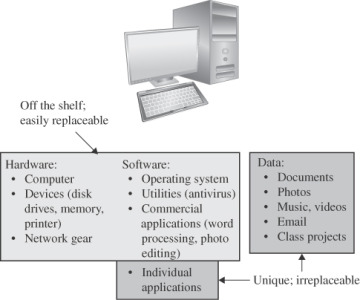

Much hardware and software is off the shelf, meaning that it is commercially available (not custom-made for your purpose) and can be easily replaced. The thing that usually makes your computer unique and important to you is its content: photos, tunes, papers, email messages, projects, calendar information, ebooks (with your annotations), contact information, code you created, and the like. Thus, data items on a computer are assets too. Unlike most hardware and software, data can be hard, if not impossible, to recreate or replace. These assets are all shown in Figure 1.2.

FIGURE 1.2 Computer Objects of Value

These three things (hardware, software, and data) contain or express your intellectual property: things like the design for your next new product, the photos from your recent vacation, the chapters of your new book, or the genome sequence resulting from your recent research. All these things represent a significant endeavor or result, and they have value that differs from one person or organization to another. It is that value that makes them assets worthy of protection. Other aspects of a computer-based system can be considered assets too. Access to data, quality of service, processes, human users, and network connectivity also deserve protection; they are affected or enabled by the hardware, software, and data. So in most cases, protecting hardware, software, and data (including its transmission) safeguards these other assets as well.

Computer systems (hardware, software, and data) have value and deserve security protection.

In this book, unless we specifically call out hardware, software, or data, we refer to all these assets as the computer system, or sometimes as just the computer. And because so many devices contain processors, we also need to think about the computers embedded in such variations as mobile phones, implanted pacemakers, heating controllers, smart assistants, and automobiles. Even if the primary purpose of a device is not computing, the device’s embedded computer can be involved in security incidents and represents an asset worthy of protection.

Values of Assets

After identifying the assets to protect, we next determine their value. We make value-based decisions frequently, even when we are not aware of them. For example, when you go for a swim, you might leave a bottle of water and a towel on the beach but not a wallet or cell phone. The difference in protection reflects the assets’ value to you.

Indeed, the value of an asset depends on its owner’s or user’s perspective. Emotional attachment, for example, might determine value more than monetary cost. A photo of your sister, worth only a few cents in terms of computer storage or paper and ink, may have high value to you and no value to your roommate. Other items’ values may depend on their replacement cost, as shown in Figure 1.3. Some computer data are difficult or impossible to replace. For example, that photo of you and your friends at a party may have cost you nothing, but it is invaluable because it cannot be recreated. On the other hand, the DVD of your favorite film may have cost a significant amount of your money when you bought it, but you can buy another one if the DVD is stolen or corrupted. Similarly, timing has bearing on asset value. For example, the value of the plans for a company’s new product line is very high, especially to competitors. But once the new product is released, the plans’ value drops dramatically.

FIGURE 1.3 Values of Assets

Assets’ values are personal, time dependent, and often imprecise.

The Vulnerability–Threat–Control Paradigm

The goal of computer security is to protect valuable assets. To study different protection mechanisms or approaches, we use a framework that describes how assets may be harmed and how to counter or mitigate that harm.

A vulnerability is a weakness in the system—for example, in procedures, design, or implementation—that might be exploited to cause loss or harm. For instance, a particular system may be vulnerable to unauthorized data manipulation because the system does not verify a user’s identity before allowing data access.

A vulnerability is a weakness that could be exploited to cause harm.
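The example of a system that does not verify a user's identity can be made concrete with a small sketch. The code below is only an illustration under stated assumptions: the `RecordStore` class, its method names, the user names, and the tokens are all hypothetical, and a real system would use a vetted authentication mechanism rather than this toy check.

```python
import hmac


class RecordStore:
    """Toy in-memory data store; every name here is hypothetical."""

    def __init__(self, credentials):
        # Maps user name -> secret token. A real system would store
        # salted hashes rather than plaintext secrets.
        self._credentials = dict(credentials)
        self._records = {}

    def write_unchecked(self, user, key, value):
        # Vulnerability: the claimed identity is never verified,
        # so anyone can change any record.
        self._records[key] = value

    def write_checked(self, user, token, key, value):
        # Control: verify the user's identity before allowing the write.
        expected = self._credentials.get(user)
        if expected is None or not hmac.compare_digest(expected, token):
            raise PermissionError(f"identity of {user!r} could not be verified")
        self._records[key] = value

    def read(self, key):
        return self._records.get(key)


store = RecordStore({"alice": "correct-horse"})
store.write_checked("alice", "correct-horse", "balance", 100)

# An unverified caller exploits the weakness and tampers with the data.
store.write_unchecked("mallory", "balance", 0)
tampered = store.read("balance")

# With the identity check in place, the same attempt is rejected.
store.write_checked("alice", "correct-horse", "balance", 100)
try:
    store.write_checked("mallory", "guess", "balance", 0)
    blocked = False
except PermissionError:
    blocked = True
```

The weakness lies entirely in `write_unchecked`: nothing is wrong with the data itself, but the missing identity check leaves it open to unauthorized manipulation.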

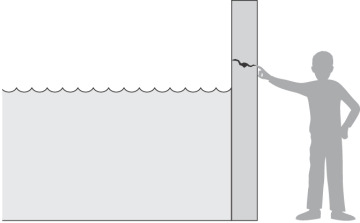

A threat to a computing system is a set of circumstances that has the potential to cause loss or harm. To see the difference between a threat and a vulnerability, consider the illustration in Figure 1.4. Here, a wall is holding water back. The water to the left of the wall is a threat to the man on the right of the wall: The water could rise, overflowing onto the man, or it could stay below the top of the wall but press on it hard enough to make the wall collapse. So the threat of harm is the potential for the man to get wet, get hurt, or be drowned. For now, the wall is intact, so the threat to the man is unrealized.

FIGURE 1.4 Threat and Vulnerability

A threat is a set of circumstances that could cause harm.

However, we can see a small crack in the wall—a vulnerability that threatens the man’s security. If the water rises to or beyond the level of the crack, it will exploit the vulnerability and harm the man.

There are many threats to a computer system, including human-initiated and computer-initiated ones. We have all experienced the results of inadvertent human errors, hardware design flaws, and software failures. But natural disasters are threats too; they can bring a system down when your apartment—containing your computer—is flooded or the data center collapses from an earthquake, for example.

A human who exploits a vulnerability perpetrates an attack on the system. An attack can also be launched by another system, as when one system sends an overwhelming flood of messages to another, virtually shutting down the second system’s ability to function. Unfortunately, we have seen this type of attack frequently, as denial-of-service attacks deluge servers with more messages than they can handle. (We take a closer look at denial of service in Chapter 6, “Networks.”)

How do we address these problems? We use a control or countermeasure as protection. A control is an action, device, procedure, or technique that removes or reduces a vulnerability. In Figure 1.4, the man is placing his finger in the crack, controlling the threat of water leaks until he finds a more permanent solution to the problem. In general, we can describe the relationship among threats, controls, and vulnerabilities in this way:

Controls prevent threats from exercising vulnerabilities.

A threat is blocked by control of a vulnerability.
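One way to check your understanding of this relationship is to express the wall illustration as a few lines of code. The sketch below is a simplified model, not a formal definition: the `Wall` class, the `man_gets_wet` function, and all of the heights are hypothetical values chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Wall:
    height: float                # top of the wall
    crack_height: float          # where the crack (the vulnerability) sits
    crack_plugged: bool = False  # the control: a finger in the crack


def man_gets_wet(water_level: float, wall: Wall) -> bool:
    """Return True if the threat (the water) is realized as harm."""
    if water_level > wall.height:
        # Overflow: plugging the crack does not address this threat.
        return True
    # The water exploits the crack only if it reaches the crack
    # and no control is blocking it.
    reaches_crack = water_level >= wall.crack_height
    return reaches_crack and not wall.crack_plugged


open_crack = Wall(height=3.0, crack_height=1.0)
plugged_crack = Wall(height=3.0, crack_height=1.0, crack_plugged=True)

below_crack = man_gets_wet(0.5, open_crack)       # threat present but unrealized
exploited = man_gets_wet(1.5, open_crack)         # vulnerability exploited
controlled = man_gets_wet(1.5, plugged_crack)     # control blocks the threat
overflow = man_gets_wet(3.5, plugged_crack)       # a different threat gets through
```

The last case is a reminder that a control addresses a particular vulnerability: plugging the crack does nothing if the water rises over the top of the wall.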

Before we can protect assets, we need to know the kinds of harm we must protect them against. We turn now to examine threats to valuable assets.